How Much Have You Spent on Amazon? Analyzing Amazon Data

How much money have I spent on Amazon? — That's a question that will interest, and possibly terrify, longtime customers of the ecommerce giant. But if you want the answer, it is available. You can find out how much you've spent on Amazon, and quite a bit more.

Let's walk through how.

We're going to analyze our Amazon data using a little Python programming. But if you've never coded anything before, don't worry! We're going to walk through it all together. By the end of this article, you'll have written fewer than thirty lines of code, and you'll have done some cool things like:

- Figured out the total amount of money you've spent on Amazon, ever.

- Found your most and least expensive orders, and your average and median order totals.

- Figured out how much you've paid in taxes, and your effective sales tax rate on Amazon.

- Visualized your spending habits day-by-day in a bar graph.

- Customized some elements of the bar graph, like size and colors.

If you've ever thought about learning a little code, or if you've ever wanted to know how much you've spent on Amazon, come along with us! You'll be amazed at what you can do (although you might be horrified by how much you've spent).

Getting Ready For the Analysis

1. Downloading and Installing The Tools

To do this analysis, we're going to do a little Python programming. But don't panic! Even if you've never written a line of code in your life, you can do this! We'll walk through the process step by step.

The first step is to get a tool called Anaconda installed on your computer. This will allow us to do our programming using something called Jupyter Notebooks. Jupyter Notebooks is a great tool for doing data analysis projects like this, and once you get the hang of it, you may find yourself using it a lot.

We have an in-depth tutorial on Jupyter Notebooks if you'd like to go deeper, but today what we'll be doing is pretty straightforward, and we'll walk through it all right on this page.

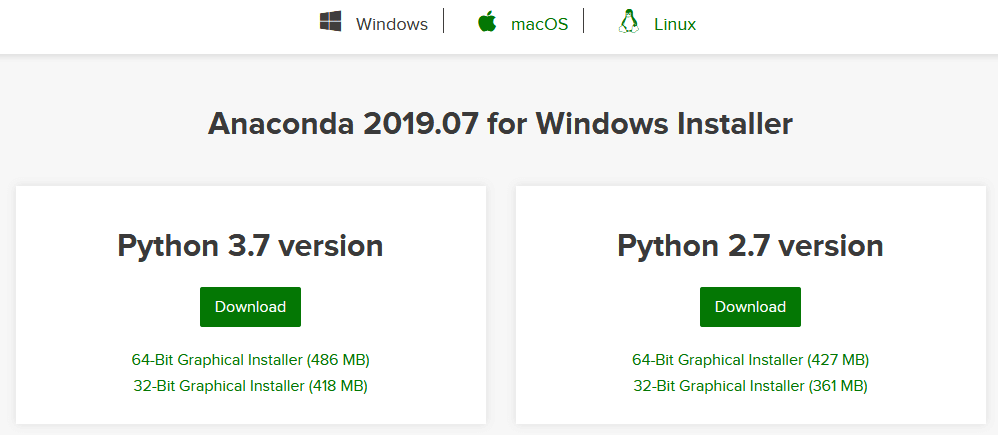

First, we'll need to head to this page on the Anaconda website, scroll down slightly, and then choose our operating system — Windows, Mac, or Linux. Once we've selected the right operating system you're using, we'll click on the green "Download" button under Python 3.7 Version to download Anaconda.

Once the download is finished, we can install it the same way we would any other app or program: just double-click on the downloaded file and a setup wizard will pop-up to guide us through the installation process.

For our purposes here, the default installation settings will be fine. We'll want to note the folder we're installing it in, though. Although we can save and access files from anywhere on the computer within Jupyter Notebooks, today we'll be saving our data set and our Jupyter Notebook file into this same folder for easy access.

Once Anaconda is installed, we can proceed to the next step:

Downloading Amazon Order History Data

Amazon allows you to download pretty extensive reports on the orders you've made, although the available data only dates back to 2006. Still, if you're a regular Amazon user, more than a decade of data is likely to contain some interesting insights!

To get the data, clicking on this link while logged in should take us directly to the order reports download page. But if that doesn't work, we can also navigate to that page directly: go to Amazon.com and click the Accounts and Lists button in the top right. On the next page, look for the Ordering and Shopping Preferences section, and click on the link under that heading that says "Download order reports".

If you'd like to work through this tutorial but would prefer not to use your own data, you can download the same anonymized data set we're using for this tutorial.

Amazon offers four different report types. For now, we're going to download an Orders and shipments report, so select that option from the drop-down menu. Then, we can choose a start date and end date for the data we'd like to look at. To see all of Amazon's stored data, we'll need to set the Start Date to January 1, 2006. Then, we can click the little "Use today" button next to the End Date to automatically fill in today's date.

(If we wanted to look at less than all of our order history, there are also "Quick Set Options" on the right hand side of the page that we can use to quickly input common time periods we might want to look at, like last year, or the most recent month. But if we're trying to find out how much we've spent on Amazon in total, then we'll need to download everything from 2006 to today.)

We can also choose to add a name to the report — this isn't required and it's fine to leave it blank, but it may be worth doing if you plan to download lots of different reports and want to make it easy to tell them apart.

Once everything is ready, click "Request Report" and Amazon will begin building the report for you. The more data you're asking for and the more orders you've made, the longer this process could take! But within a minute or two, we should get a download message pop up for a .csv file. This is the report, so click "Save" to download it.

(If you don't get the pop-up prompt, you can also download it by clicking "Download" in the "Actions" section of the "Your Reports" table that's just below the "Request Order History Report" form on that same page.)

Renaming and Moving the Amazon Order Data

We're almost ready to dive into a little programming and do our analysis. But first, let's make things a little easier by renaming and moving that file we just downloaded. By default, this will probably be called something like 01_Jan_2006_to_10_Sept_2019.csv, but let's rename it something simpler: amazon-orders.csv.

Next, we'll move the amazon-orders.csv file to the same folder we installed Anaconda in so that we can access it easily from Jupyter Notebooks. If you didn't change the default folder during the installation process, this will be C:/Users/YourUsername/ on Windows.

Open a Jupyter Notebook

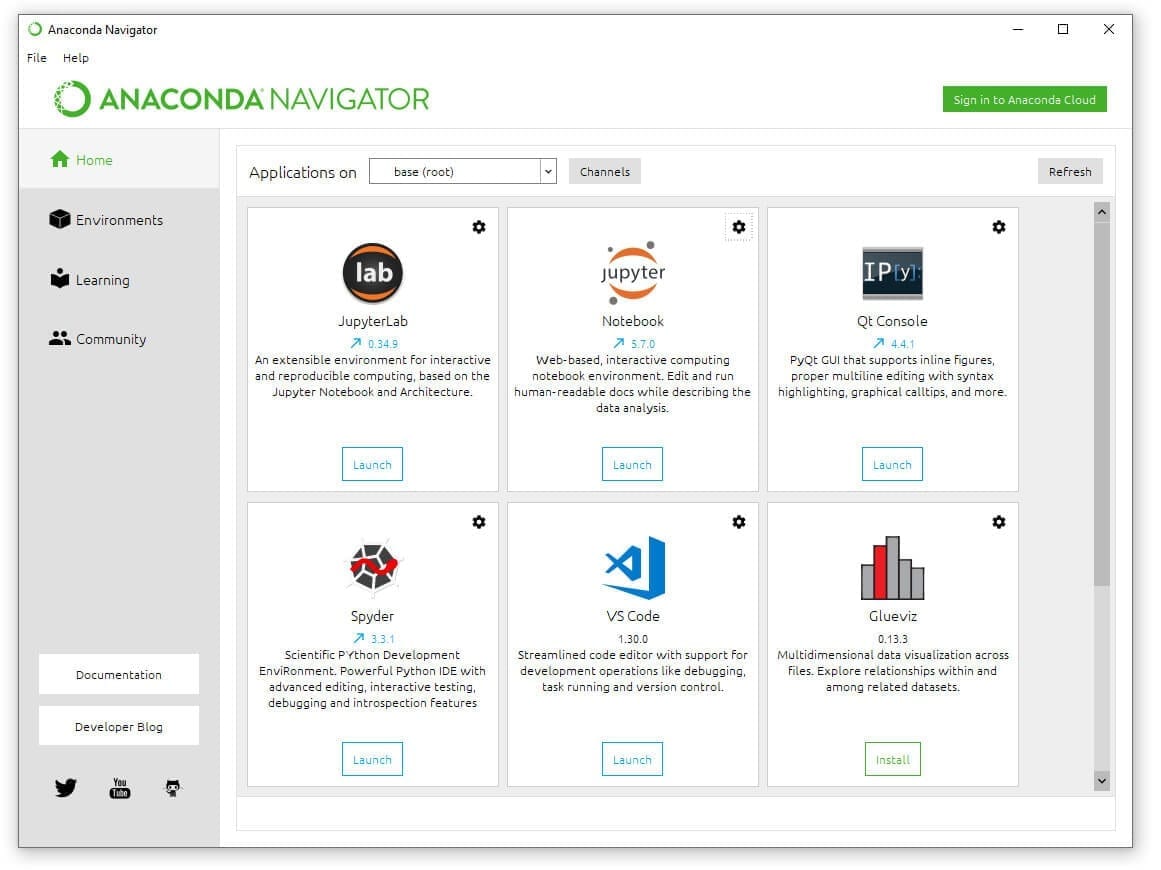

Now we're finally getting to the fun part! Open the Anaconda Navigator app. You can find this by searching for "Anaconda Navigator" on your computer. On Windows, you can also find it in the Start Menu, and on Mac it should be in your Applications folder. Once it's open, you'll see a screen that looks like this:

Click the "Launch" button under Jupyter Notebook. That will open a new screen in your browser. On the top right of that screen click "New" and then under "Notebook" in the drop-down menu, click "Python 3".

Voila! You've opened a blank Jupyter Notebook and we're ready to begin our analysis!

How Much Have I Spent on Amazon?

Jupyter Notebooks allows us to write and run little snippets of code in cells. On the screen, below the menu and buttons, you'll see a little block that says In [ ]: with a blinking cursor. This is the first cell, and it's where we can write our code.

For this analysis, we'll be using Python, a very versatile programming language that's popular for data analysis. We'll also be using Python packages called pandas and matplotlib. You can think of packages as sort of like browser plug-ins — they're tools that help extend the capabilities of regular Python. Pandas and matplotlib are already installed with Anaconda, so we don't need to download or install them ourselves.

Getting the Data into Pandas

Our first step will be to write the code to open and look at our Amazon data, so that we can see what we're working with. We'll be using pandas for most of our analysis, so our first step will be to import the pandas package and give it the nickname pd so that we can refer to it more easily in our code.

Next, we'll create a new variable called df, short for DataFrame, and then tell pandas to store our Amazon data in in that variable. A DataFrame is basically just the pandas name for a table. What we're really doing here is storing our data in a table format.

To do that, we need to tell pandas to read the .csv. file as a Dataframe. We'll use pd.read_csv('file_name.csv') for this. Let's break that down piece by piece:

pdtells Python to use pandas to do this operation.read_csv()tells pandas to read a csv file and store it as a DataFrame- The

'file_name.csv'part inside the parentheses tells pandas to read a csv file with that filename, in the same folder where Anaconda is installed.

(If we wanted to get a CSV file from a different folder, we'd have to put the full file path, like C:/Users/Username/file_name.csv. Since we stored our amazon-orders.csv file in the same folder we installed Anaconda, so we only need to include the file name here.)

Finally, we'll use a pandas function called .head() to look at the first five rows our our data, which will give us a taste of what we're working with, and what kinds of data Amazon's order history includes. For this, we use the syntax DataFrame.head(), so since our DataFrame is stored in the variable df, we'll use df.head().

We'll be using .head() a lot in this tutorial — it's a great quick way to double-check that our code has made the changes we think it has made, at least when we're making changes that will be evident from visually looking at the table.

Here's how it looks all put together. Let's input this code into our first Jupyter Notebook cell, and then hit the "Run" button, or use Shift + Enter (Cmd + Enter on Mac) on the keyboard to run the selected cell.

import pandas as pd

df = pd.read_csv('amazon-orders.csv')

df.head()

| Order Date | Order ID | Payment Instrument Type | Website | Purchase Order Number | Ordering Customer Email | Shipment Date | Shipping Address Name | Shipping Address Street 1 | Shipping Address Street 2 | ... | Order Status | Carrier Name & Tracking Number | Subtotal | Shipping Charge | Tax Before Promotions | Total Promotions | Tax Charged | Total Charged | Buyer Name | Group Name | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 04/01/19 | 112-0000000-0000001 | Visa - 0001 | Amazon.com | NaN | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | NaN | ... | Shipped | USPS(0001) | $35.00 | $0.00 | $1.93 | $0.00 | $1.93 | $36.93 | Your Name | NaN |

| 1 | 04/01/19 | 112-0000000-0000002 | Visa - 0001 | Amazon.com | NaN | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | NaN | ... | Shipped | USPS(0001) | $16.99 | $0.00 | $0.00 | $0.00 | $0.00 | $16.99 | Your Name | NaN |

| 2 | 04/01/19 | 112-0000000-0000003 | Visa - 0001 | Amazon.com | NaN | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | NaN | ... | Shipped | USPS(0001) | $9.99 | $0.00 | $0.00 | $0.00 | $0.00 | $9.99 | Your Name | NaN |

| 3 | 04/04/19 | 112-0000000-0000004 | Visa - 0001 | Amazon.com | NaN | [email protected] | 04/05/19 | Your Name | 1 YOUR STREET | NaN | ... | Shipped | UPS(0002) | $147.98 | $0.00 | $8.14 | $0.00 | $8.14 | $156.12 | Your Name | NaN |

| 4 | 04/05/19 | 112-0000000-0000005 | Visa - 0001 | Amazon.com | NaN | [email protected] | 04/07/19 | Your Name | 1 YOUR STREET | NaN | ... | Shipped | USPS(0001) | $14.99 | $0.00 | $0.00 | $0.00 | $0.00 | $14.99 | Your Name | NaN |

Cool! With just three lines of code, we've imported all of our data, and now we're able to see a preview of it in convenient table format.

Cool! With just three lines of code, we've imported all of our data, and now we're able to see a preview of it in convenient table format.

If we want to get a readout of the full size of our data set, we can use df.shape. This gives us the dimensions of our DataFrame.

df.shape

(59, 23)

Now we know that our DataFrame has 59 rows and 23 columns. (We're using a pretty small data set for this tutorial; your DataFrame will probably be a lot bigger!)

Looking at the table above, the first thing you'll notice is probably that it's fake data. The data set we're using in this tutorial is an edited version of some of my own Amazon purchase history. I've changed names, addresses, card numbers, and purchase prices to protect my privacy, but this data is in the exact same format as the real data you'll download from Amazon, and the code that we use on this anonymized data will work on your real data, too — no matter how much of it you have.

The column names that Amazon's data comes with by default are pretty descriptive, and it's clear that there are interesting things to look at in this data set. Our first goal will be to figure out how much we've spent in total, and calculate our average price per order. That means the data in the Total Charged column is definitely going to be important for us.

Looking at the above, we may also notice that some columns have the value NaN. This is a null value; NaN is the way that pandas represents the absence of data. When we're doing our analysis, we'll have to be conscious of these missing values in our data set.

Cleaning the Data

Cleaning the data is an important part of most data analysis projects, and we'll need to do a little bit of data cleaning here before we can do our analysis and find out the total sum we've spent on Amazon.

Let's start by dealing with those NaN values. We need to do some math with our data, but 1 + NaN is a tough math problem to solve. NaN, after all, isn't a number! Since NaN represents the absence of information, let's just fill in all of those NaN values using pandas's handy df.fillna() function. This allows us to automatically replace every NaN value with something else. In this case, let's replace NaN with the number zero.

We need to be a little careful here, though. We don't just want to replace those values temporarily! We want to replace them and then store that version of the DataFrame as our new DataFrame. We'll use df = df.fillna(0) to replace the values and re-define our df variable to point to that new dataframe.

Then, we'll use the .head() function again to make sure our changes worked.

df = df.fillna(0)

df.head()

| Order Date | Order ID | Payment Instrument Type | Website | Purchase Order Number | Ordering Customer Email | Shipment Date | Shipping Address Name | Shipping Address Street 1 | Shipping Address Street 2 | ... | Order Status | Carrier Name & Tracking Number | Subtotal | Shipping Charge | Tax Before Promotions | Total Promotions | Tax Charged | Total Charged | Buyer Name | Group Name | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 04/01/19 | 112-0000000-0000001 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $35.00 | $0.00 | $1.93 | $0.00 | $1.93 | $36.93 | Your Name | 0.0 |

| 1 | 04/01/19 | 112-0000000-0000002 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $16.99 | $0.00 | $0.00 | $0.00 | $0.00 | $16.99 | Your Name | 0.0 |

| 2 | 04/01/19 | 112-0000000-0000003 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $9.99 | $0.00 | $0.00 | $0.00 | $0.00 | $9.99 | Your Name | 0.0 |

| 3 | 04/04/19 | 112-0000000-0000004 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/05/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | UPS(0002) | $147.98 | $0.00 | $8.14 | $0.00 | $8.14 | $156.12 | Your Name | 0.0 |

| 4 | 04/05/19 | 112-0000000-0000005 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/07/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $14.99 | $0.00 | $0.00 | $0.00 | $0.00 | $14.99 | Your Name | 0.0 |

Great! As we can see, our null values have all been replaced with zeroes, which is going to make doing calculations easier.

Great! As we can see, our null values have all been replaced with zeroes, which is going to make doing calculations easier.

We still have a little data cleaning problem, though. The prices in this data set have been stored as strings, which means the computer is seeing them as a series of characters, rather than numbers that it can add together. They also include the character $, which isn't a number.

Before we can perform calculations with these values, we need to remove those dollar signs, and then we need to turn the string values into floats — a numeric data type that Python can easily perform calculations on. Thankfully, these operations will require just a few more lines of code.

Specifically, there are two pandas functions we can use. The first, Series.str.replace() allows us to replace one character or set of characters with another in any column (called a Series in pandas) of a dataframe. For our purposes, this is helpful because we can use the syntax df["Total Charged"].str.replace('$','') to tell Python to replace what's inside of the first set of '' ($) that's in the "Total Charged" column with what's inside of the second set (nothing).

In other words, we're telling pandas to replace the character $ with nothing in that column. That way, all that will be left is the numbers. Once we just have numbers in the column, we can use the function .astype(float) to convert each entry in the column into the float type so that we can do our math.

Once again, we don't want to make these changes temporarily, we want to modify our DataFrame and then point our df variable to that new version of the DataFrame. However, since this time we're only modifying one column of the data, we'll use df["Total Charged"] to specify that we only want to make these changes in that column.

Now, let's put it all together, and then once again use head() to check our work on the next line.

df["Total Charged"] = df["Total Charged"].str.replace('$','').astype(float)

df.head()

| Order Date | Order ID | Payment Instrument Type | Website | Purchase Order Number | Ordering Customer Email | Shipment Date | Shipping Address Name | Shipping Address Street 1 | Shipping Address Street 2 | ... | Order Status | Carrier Name & Tracking Number | Subtotal | Shipping Charge | Tax Before Promotions | Total Promotions | Tax Charged | Total Charged | Buyer Name | Group Name | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 04/01/19 | 112-0000000-0000001 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $35.00 | $0.00 | $1.93 | $0.00 | $1.93 | 36.93 | Your Name | 0.0 |

| 1 | 04/01/19 | 112-0000000-0000002 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $16.99 | $0.00 | $0.00 | $0.00 | $0.00 | 16.99 | Your Name | 0.0 |

| 2 | 04/01/19 | 112-0000000-0000003 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $9.99 | $0.00 | $0.00 | $0.00 | $0.00 | 9.99 | Your Name | 0.0 |

| 3 | 04/04/19 | 112-0000000-0000004 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/05/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | UPS(0002) | $147.98 | $0.00 | $8.14 | $0.00 | $8.14 | 156.12 | Your Name | 0.0 |

| 4 | 04/05/19 | 112-0000000-0000005 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/07/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $14.99 | $0.00 | $0.00 | $0.00 | $0.00 | 14.99 | Your Name | 0.0 |

Scroll over to the right in this table and you can see that we've done it: the dollar signs are all gone. Now it's time for the fun part, or perhaps the scary part: finding out how much we've actually spent on Amazon over the years!

Scroll over to the right in this table and you can see that we've done it: the dollar signs are all gone. Now it's time for the fun part, or perhaps the scary part: finding out how much we've actually spent on Amazon over the years!

Calculating the Total Amount of Money Spent on Amazon

Pandas includes some basic math functions that we can use on this column. Let's start with .sum(), which will give us the total of every number in this column — in other words, the total we've spent on Amazon.

df["Total Charged"].sum()

1777.7300000000002

There it is: $1,777.73. That's how much money I have spent on Amazon, at least within the confines of this limited and edited data set.

I don't want to share the real total number I found when I analyzed the full 13 years of order history Amazon has for me, but it was...bigger. Did your total surprise you?

Calculating Other Things: Average Spend on Amazon, Biggest Purchase, Etc.

Finding the total isn't the only thing we can do now that we've got that column of data all cleaned up! Pandas allows us to very quickly make some other calculations. For example, what was the average purchase price? We can use .mean() to find out:

df["Total Charged"].mean()

30.131016949152542

My average spend per order, apparently, is about $30.

Since looking at the mean/average can sometimes hide outliers, it's nice to check out the median as well. We can use .median() to do that.

df["Total Charged"].median()

15.95

That's interesting! My median spend is quite a bit lower than my average spend. Looks like I made a few expensive orders. Let's see what the highest-priced order was by using max():

df["Total Charged"].max()

210.99

OK, my priciest order was $211. What about my lowest-priced order? We can use .min() to find that one:

df["Total Charged"].min()

1.04

What on earth did I buy on Amazon that only cost $1? I can't remember! (I could find that out in a different Amazon report, the Items report, but that's bit more complicated and thus a topic for another time.)

How Much Have I Paid in Sales Tax?

That's a lot of interesting analysis of the total price I paid for each order, but what if I wanted to look into a different column? For example, what if I wanted to see how much I've paid in sales tax?

That data's stored in the Tax Charged column. This column hasn't been cleaned yet, so it has the same problems that the Total Charged column did when we started.

But here's the beauty of programming: we've already written the code that can fix that. All we need to do is copy-paste our column-cleaning code from above, and replace "Total Charged" with "Tax Charged" to tell Python to perform those same operations on the "Tax Charged" column.

df["Tax Charged"] = df["Tax Charged"].str.replace('$','').astype(float)

df.head()

| Order Date | Order ID | Payment Instrument Type | Website | Purchase Order Number | Ordering Customer Email | Shipment Date | Shipping Address Name | Shipping Address Street 1 | Shipping Address Street 2 | ... | Order Status | Carrier Name & Tracking Number | Subtotal | Shipping Charge | Tax Before Promotions | Total Promotions | Tax Charged | Total Charged | Buyer Name | Group Name | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 04/01/19 | 112-0000000-0000001 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $35.00 | $0.00 | $1.93 | $0.00 | 1.93 | 36.93 | Your Name | 0.0 |

| 1 | 04/01/19 | 112-0000000-0000002 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $16.99 | $0.00 | $0.00 | $0.00 | 0.00 | 16.99 | Your Name | 0.0 |

| 2 | 04/01/19 | 112-0000000-0000003 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $9.99 | $0.00 | $0.00 | $0.00 | 0.00 | 9.99 | Your Name | 0.0 |

| 3 | 04/04/19 | 112-0000000-0000004 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/05/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | UPS(0002) | $147.98 | $0.00 | $8.14 | $0.00 | 8.14 | 156.12 | Your Name | 0.0 |

| 4 | 04/05/19 | 112-0000000-0000005 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/07/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $14.99 | $0.00 | $0.00 | $0.00 | 0.00 | 14.99 | Your Name | 0.0 |

Presto, that column is now ready for any sort of analysis we'd like to do. For example, what's the total amount of money we've paid in tax, according to this data set? We'll use

Presto, that column is now ready for any sort of analysis we'd like to do. For example, what's the total amount of money we've paid in tax, according to this data set? We'll use .sum() again to find out:

df["Tax Charged"].sum()

52.60999999999999

Apparently, about $52.61 of the total money I spent on Amazon went towards taxes. The tax rate on different items varies — we can see in the DataFrame preview above that some items did not charge any tax at all. But if we wanted to, we could figure out the overall tax rate by simply dividing our "Total Charged" sum from our "Tax Charged" sum, like so:

df["Tax Charged"].sum() / df["Total Charged"].sum()

0.02959392033660904

During this time period, I paid an overall effective sales tax rate of about 2.9

So far, the analysis we've done here is pretty cool, and we've learned something about our Amazon spending habits. If you've never written any code before and you'd like to learn more of this sort of thing, I'd recommend you stop here and sign up for our Python for Data Science Fundamentals course. It's free, and it will give you a great foundation in the fundamentals so that soon, you'll feel comfortable taking on projects like this, and even more complicated ones, totally on your own.

If you'd like a bit more of a challenge right now, though, there's a lot more we can do with this data set. Let's try to get a picture of how our spending habits have changed over time by doing some analysis that involves those date columns!

Analyzing Amazon Spending Over Time

Before we can work with dates in Python, we need to convert them to a datetime data type, so that the computer can recognize them as dates. Luckily, with pandas this is quite straightforward! We can use .pd.to_datetime() and specify the column we want to modify within those brackets to tell pandas to read that column as dates.

As before, we want to make sure we're storing this change in our df dataframe, so we'll specify that we're modifying the df['Order Date'] column, and then assign that to the "Order Date" column in our df variable so that the old "Order Date" column is replaced with our changed version.

We really only need to see one row to make sure our dates have changed here, so let's feed a custom argument to our old friend df.head(). We can customize the number of rows it displays. If we put the number 1 inside those parentheses, it will display one row of the data set.

df['Order Date'] = pd.to_datetime(df['Order Date'])

df.head()

| Order Date | Order ID | Payment Instrument Type | Website | Purchase Order Number | Ordering Customer Email | Shipment Date | Shipping Address Name | Shipping Address Street 1 | Shipping Address Street 2 | ... | Order Status | Carrier Name & Tracking Number | Subtotal | Shipping Charge | Tax Before Promotions | Total Promotions | Tax Charged | Total Charged | Buyer Name | Group Name | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 2019-04-01 | 112-0000000-0000001 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $35.00 | $0.00 | $1.93 | $0.00 | 1.93 | 36.93 | Your Name | 0.0 |

| 1 | 2019-04-01 | 112-0000000-0000002 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $16.99 | $0.00 | $0.00 | $0.00 | 0.00 | 16.99 | Your Name | 0.0 |

| 2 | 2019-04-01 | 112-0000000-0000003 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/01/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $9.99 | $0.00 | $0.00 | $0.00 | 0.00 | 9.99 | Your Name | 0.0 |

| 3 | 2019-04-04 | 112-0000000-0000004 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/05/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | UPS(0002) | $147.98 | $0.00 | $8.14 | $0.00 | 8.14 | 156.12 | Your Name | 0.0 |

| 4 | 2019-04-05 | 112-0000000-0000005 | Visa - 0001 | Amazon.com | 0.0 | [email protected] | 04/07/19 | Your Name | 1 YOUR STREET | 0.0 | ... | Shipped | USPS(0001) | $14.99 | $0.00 | $0.00 | $0.00 | 0.00 | 14.99 | Your Name | 0.0 |

From that one row, we can see that the date formatting has changed from

From that one row, we can see that the date formatting has changed from 2019/04/01 to 2019-04-01. That's a good sign that our change to datetime has worked, and we can proceed to the next step of our analysis.

One thing that might be interesting to look at is how much was spent on each particular day in this data set. That's an analysis that would be easiest to look at visually, perhaps in the form of a bar graph. Luckily, the pandas and matplotlib packages make it possible for us to build and view a variety of chart types right inside a Jupyter Notebook!

We'll start with a little Jupyter Notebooks magic that will allow us to display charts in our noteboot. (Note: when you run this code cell, nothing will visibly change, but it will enable matplotlib to display charts in your notebook.)

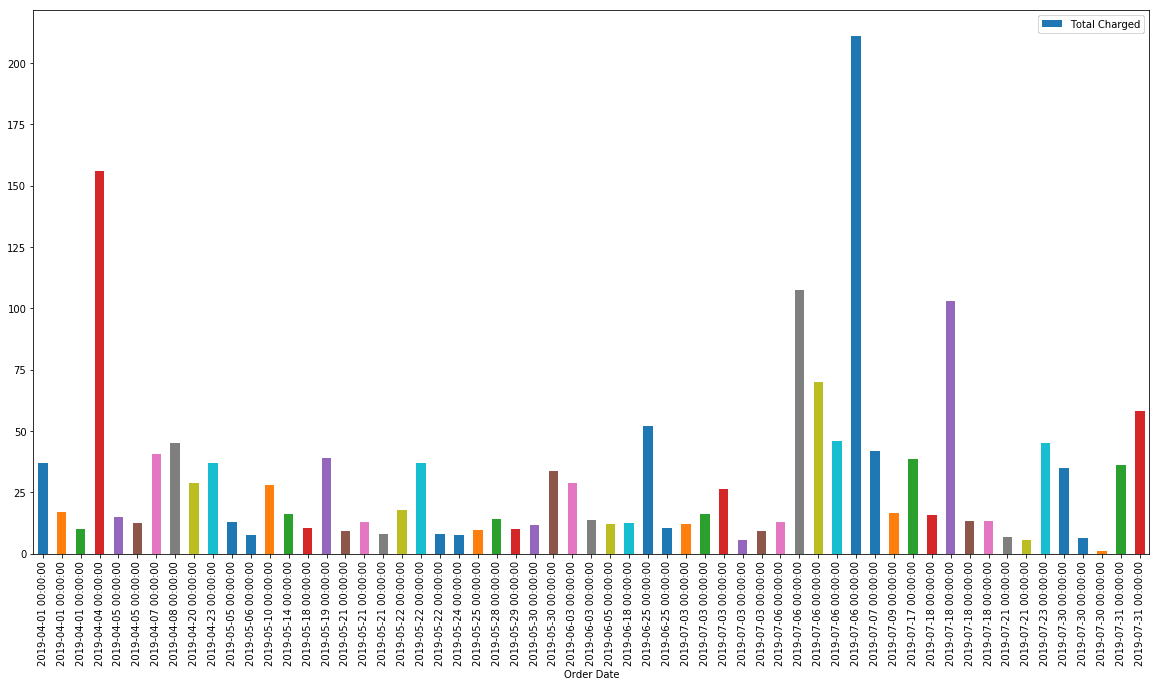

Great! We can make a basic bar graph in a pretty straightforward way using df.plot.bar(). We can pass the desired X and Y axis columns to this function as arguments, and we can add other arguments as well to do things like rotate the x-axis labels. but take a look at what happens when we chart our dataframe as-is:

df.plot.bar(x='Order Date', y='Total Charged', rot=90)

<matplotlib.axes._subplots.AxesSubplot at 0x1c30f9c4e10>

That chart is a little small, and it's hard to see whether we've really gotten what we want. Let's make it bigger so that we can read the data more easily.

We can do that by adding an argument to our plotting code called figsize. This allows us to define the size of the graph (fig is short for figure) by width and height. The units it uses aren't particularly intuitive, but (20, 10) is a good default to start from for most charts, and then we can adjust further if needed.

All we need to do is add figsize=(20,10) to the arguments we already have in our code. Note that each separate argument is separated with a comma.

df.plot.bar(x='Order Date', y='Total Charged', rot=90, figsize=(20,10))

<matplotlib.axes._subplots.AxesSubplot at 0x1c3129870b8>

That's easier to read! But if we take a close look at the chart above, we can spot a problem: when multiple orders were placed on the same day, each order is getting a separate bar in the bar chart. Look, for example, at the first three bars on the chart — all three of them are for April 1, representing three separate orders placed that day.

That's not really what we were trying to visualize. We want to see how much was spent each day. Each bar should represent a day, not a single order, so if there was more than one order on that day, the totals charged for those orders should be added together.

We can do that using another built-in pandas function: df.groupby().

The syntax for this is a little bit complex, but we can break what we want to do down into two parts: 1. We want to group our data by 'Order Date', so that all the orders that share the same date are counted in a single row (i.e., we want one row for April 1, rather than three). 2. We want to get the sum of the prices paid in 'Total Charged' column for each date and make it the value for 'Total Charged' in the relevant date's row (i.e., we want the value of 'Total Charged' in the April 1 row to be the sum total of the three orders placed that day).

Since we're grouping by the order date, we'll start with df.groupby('Order Date'). The next thing we need to do is take the sum of the 'Total Charged' column in that group and set the make that our new 'Total Charged' column, so we'll follow the first part with .sum()["Total Charged"].

We want to assign the result of that, which will be a pandas Series, to a new variable. Let's call it daily_orders.

Here's what it looks like all together:

daily_orders = df.groupby('Order Date').sum()["Total Charged"]

daily_orders.head()

Order Date

2019-04-01 63.91

2019-04-04 156.12

2019-04-05 27.64

2019-04-07 40.63

2019-04-08 44.99

Name: Total Charged, dtype: float64

As we can see, the results of .head() look a bit different here. That's because this is a Series rather than a DataFrame. It looks like it has two columns, but it actually only has one: the 'Order Date' column entries are actually the index labels for our sums.

Understanding precisely how this works isn't important for this tutorial, but if you scroll up to one of your earlier df.head() printouts, you may notice that along the far left side of the table are descending numbers: 0, 1, 2, 3, 4... These numbers are index labels.

Thankfully, pandas is well equipped to plot a Series with dates as index labels, so we don't have to do anything else with our data. And if we check the numbers in our earlier DataFrame, we can see that our sums here are correct. April 1, 2019 had three separate orders, \$36.93, \$16.99, and \$9.99. The sum of these three is \$63.91, which is the value for April 1 in daily_orders. Perfect!

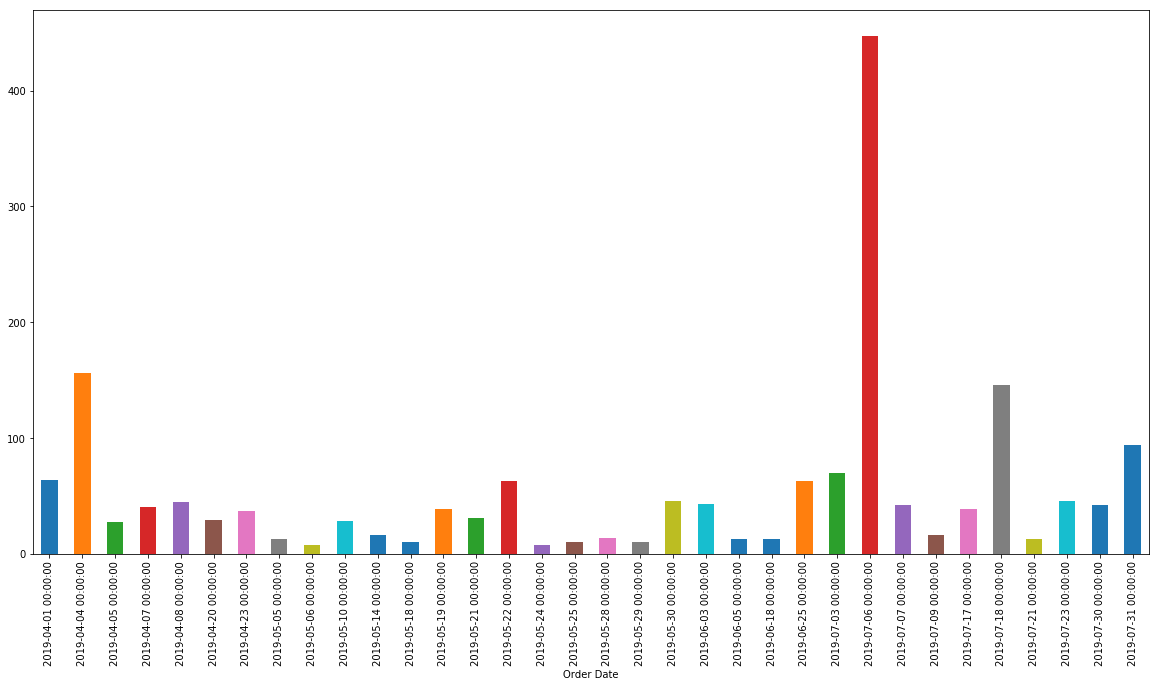

It's time to make it into a bar graph. We can do that with Series.plot.bar(), and since our series only has one column and one set of index labels, we don't even need to define our x and y axes. Pandas will do that automatically.

daily_orders.plot.bar(figsize=(20,10))

<matplotlib.axes._subplots.AxesSubplot at 0x1c3110bbf60>

Now we've got what we want being charted, but there's another quick improvement we can make.

The different colors in this chart are kind of misleading. Each bar actually represents the value for the same thing (the total Amazon charged us for orders on that day). But because each bar is a different color, someone looking at this chart might assume the colors have some meaning and that each bar represents something different.

We can change this with another argument like figsize. This one is called color. For example, adding the argument color="blue" to our plot code would make every bar in the chart blue.

This argument can accept basic colors like "blue", but it can also accept color information in other formats, including the hex codes you've probably seen used on the web. Instead of blue, let's make our chart a more interesting color: Dataquest green! The hex code for our green is #61D199, so we'll use color='#61D199'.

Let's put that into our code and see how it looks!

daily_orders.plot.bar(figsize=(20, 10), color='#61D199')

<matplotlib.axes._subplots.AxesSubplot at 0x1c311dcd5c0>

Awesome! This chart shows us something our previous analysis hasn't: although the biggest single order was just over \$200, there was a single day in July where several orders totaled up to well over \$400.

Clearly, there are still a lot of ways we could improve on this chart. We could remove the time codes at the bottom, add a Y label and a chart title, insert dates with no orders to get a more consistent picture of when orders were placed, etc.

But our goal here wasn't to create a picture perfect chart. It was to use some quick programming to mine some insight out of a large data set. And we've learned a lot! We've learned:

- How much I spent on Amazon during this period.

- What my highest, lowest, and average order totals were.

- How much tax I paid, and the effective sales tax rate I paid.

- How my spending fluctuated over time

- On which days I spent the most money

And we did all of that with fewer than thirty total lines of code!

How to Take This Project Further

Want to go deeper? Here are some ways you might try to further expand this project depending on your interests:

- Look into other arguments to add to the plot code to further beautify the graph.

- Add another bar to each day for

'Tax Charged'to each day. - Break your spending down by month, or year, instead of day.

- Download your Items order report on Amazon and do some analysis on what Categories of items you buy most, or spend the most on. (This will require reworking some of your existing code since the Items report has a lot more columns).

- Find a way to get every day (even days with no orders) into your data set so that you can more easily visualize your spending over time.

- What other websites to you shop on? See if they allow you to access any of your data.

If you found yourself enjoying this tutorial, or if you think these skills could be valuable in your life or your career, you might always want to consider learning data skills in our Data Science path. The first two courses are totally free and will cover all the fundamentals of programming in Python.

Sign up for a free account and you'll also get a weekly email with cool resources for learning data skills and advancing your career.

Couldn't I Just Do This With Excel?

Certainly, plenty of what we did in this tutorial can also be done with a product like Excel or Google Sheets. And sometimes, working with a spreadsheet program is the fastest and easiest choice. However, there are a few important reasons why being able to do a bit of programming is often better than Excel for projects like this.

1. Coding is More Powerful

You probably know how to do some of the things we did in this tutorial, like find the sum of a column, in Excel. But it's possible to do much more complex analyses pretty simply using code.

Things like coditionals (if this happens, then do that) are possible in spreadhseet software, but it gets complicated confusing really quickly, whereas it's relatively straightforward in code. And more advanced types of analysis like many types of machine learning are straight-up impossible in spreadsheet software.

2. Coding Can Be Better For Big Data

In this tutorial, we were working with a pretty small data set, the kind that would be easy to work with as a spreadsheet. But your real Amazon order history, or your Fitbit data, or your company's sales records, or whatever other data you want to work with is likely to be bigger. A lot bigger.

Big data sets can get unwieldy, and even cause slowdowns and crashes, in spreadsheet software. The software, after all, has to represent every single point of data visually as well as tracking all of the other things you're doing.

Code, on the other hand, is performing one operation at a time, and only displaying the outputs that you specifically request visually. This allows it to deal with large data sets with much more speed and more stability.

3. Coding Can Be Better For Working With Multiple Files

Imagine that every month you have to take a number of different reports in Excel and combine them into a single report for analysis. That's a real problem one Dataquest student had, and he describes the process of trying to merge dozens of different Excel sheets into a single analysis every month as a painful nightmare.

Combining and cleaning multiple data sets is both simpler and often quicker with code. Rather than opening dozens of windows and copy-pasting the data one by one into a master sheet, with programming you can write some code that accesses and combines all of the files automatically. The Dataquest student mentioned above, for example, turned what used to be a week-long data synthesis and analysis task in Excel into a task that took just a few minutes in Python.

And his time investment in writing that code will pay dividends as long as he holds that job because...

4. Coding is More Repeatable

Once you've written the code to import, clean, and analyze data from a particular source, it will work for any data that's input in the same format.

If you wanted to perform the analysis from this tutorial on your Amazon data every month, for example, all you'd have to do would be download that month's data each month, change the filename that read_csv() is reading in that first code snippet, and then run all the code cells, and all of the analysis we just did on this data set would be performed on your new data set automatically.

5. Coding is More Transparent

While you can do complex calculations in spreadsheet software, those calculations are usually hidden in cells. If you're given someone else's Excel file that's doing some complex analysis, it could take you quite a while to figure out what's actually going on. In a spreadsheet, you can see all the data, but the operations and calculations being performed on it are something you usually have to search for cell by cell.

Code is pretty much the opposite: most of the data itself isn't visible, but all of the operations and calculations being performed on it are plainly apparent, as is the sequence in which they're executed. Of course, you do need to learn the basic syntax to be able to read the code, but once you have that down it's much easier to see at a glance exactly what's happening in the analysis.

This also makes it easier to catch errors like having a + instead of a - somewhere, where as an error like that hidden in a single cell of a large spreadsheet might go unnoticed for a long time.

6. Accessing Data is Easier With Code

The data you want to analyze isn't always going to come in spreadsheet form. A lot of apps and services will only let you access data via APIs, which you'll need coding skills to access.

Or you might want to collect your own data set by scraping data from a website — again, this is something that you simply can't do with spreadsheet software (and copy-pasting data points into Excel one-by-one is exhausting).

7. Coding is More Fun

Maybe you've never thought of yourself as a programmer before, but if you got through the end of this tutorial, you've already written and run more code than most people ever will. It feels good, doesn't it?

Writing code can definitely be frustrating at times. But when you get everything working as intended, it's a really satisfying feeling. And the amount of time you can save for yourself by automating repetitive analysis projects is astounding.

If you want to learn more, our other Python tutorials are a good resource, and our interactive data science courses can take you from zero to job-ready in a variety of data and programming skill areas.