The 17 Best Free Tools for Data Science

One of the best things about working in the data science industry is that it's full of free tools. The data science community is, by and large, quite open and giving, and a lot of the tools that professional data analysts and data scientists use every day are completely free.

If you're just getting started, though, the sheer number of resources available to you can be overwhelming. So rather than bury you in a list of open-source goodies, we've picked out some of our absolute favorites: the best free tools for data science using Python, R, and SQL.

Languages

It's easy to forget because they're so ubiquitous, but programming languages are truly the best free tools for data science work. Simply learning one of these languages puts tremendous analytical power at your fingertips. And the three we've listed here, the three most commonly used languages in data science, are all completely free to use.

For most people, choosing a language is the biggest decision they'll make when selecting data science tools. The three best languages are:

- R

- Python

- SQL

You'll find hundreds of articles that try to tease apart which of Python and R is better for data science. We've written our own article comparing Python versus R on more objective grounds: how each language handles common data science tasks.

The reality is that they're both great options, each with its own strengths, which we'll outline below. If you're just starting out, it's better to pick one and start learning rather than waste time trying to work out which is best.

SQL, on the other hand, is more complementary to both Python and R. It might not be the first language you learn, but you will need to learn it.

1. R

The R programming language was initially created in the mid-90s. R is the statistical language of choice throughout academia and has a reputation for being easy to learn, especially for those who've never used a programming language before.

A key benefit of the R language is that it was designed primarily for statistical computing, so many of the key features that data scientists need are built-in.

R also has a strong ecosystem of packages that allow for extended capabilities. There are several R packages which are considered by many to be essential if you're working with data. We'll outline those later in the “R Packages” section.

2. Python

Like R, Python first appeared in the 90s. But unlike R, Python is a general-purpose programming language. It's often used for web development, and it is one of the most popular programming languages overall.

Using Python for data science work started to become popular in the mid-to-late '00s after specialized libraries (analogous to R packages) emerged that provided better functionality for working with data. Over the last decade, Python's use as a data science language has grown tremendously, and it's now the most popular language for data science by some metrics.

One of the key benefits of Python is that because it's a general-purpose language, it is easier to perform general tasks that intersect with your data work. Similarly, if you learn Python and later decide that software development is a better fit for you than data science, a lot of what you've learned is transferable.

3. SQL

SQL is a complementary language to Python and R; it's often the second language someone learns if they're looking to get into data science. SQL is used to interact with data stored in databases.

Because most of the world's data is stored in databases, SQL is an incredibly valuable language to learn. It's common for data scientists to use SQL to retrieve data that they will then clean and analyze using Python or R.
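As a rough illustration of that workflow, here is a minimal sketch that uses Python's built-in sqlite3 module. The in-memory database, the `orders` table, and its rows are all made up for the example; a real project would connect to an existing database instead.

```python
import sqlite3
from statistics import mean

# Build a throwaway in-memory database (hypothetical table and rows).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (customer TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("ana", 20.0), ("ana", 35.0), ("ben", 12.5), ("cy", 50.0)],
)

# Step 1: use SQL to retrieve only the rows we care about.
rows = conn.execute(
    "SELECT customer, amount FROM orders WHERE amount >= ? ORDER BY amount",
    (20.0,),
).fetchall()
conn.close()

# Step 2: continue the analysis in Python.
amounts = [amount for _, amount in rows]
average_large_order = mean(amounts)

print(rows)
print(average_large_order)
```

The SQL query does the filtering and sorting, and Python takes over once the data is in memory.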

Many companies also use SQL as a “first-class” analysis language, using tools that allow visualizations and reports to be built directly from the results of SQL queries.
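To give a feel for analysis done directly in SQL, the sketch below runs an aggregate query whose result could feed a report as-is. It again uses Python's built-in sqlite3 module only as a convenient way to run the query; the `sales` table and its values are invented for illustration.

```python
import sqlite3

# Hypothetical sales data in a throwaway in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [
        ("north", 100.0),
        ("south", 80.0),
        ("north", 50.0),
        ("south", 20.0),
        ("west", 70.0),
    ],
)

# The grouping, counting, and summing all happen inside the SQL query.
report = conn.execute(
    "SELECT region, COUNT(*) AS n_sales, SUM(revenue) AS total "
    "FROM sales GROUP BY region ORDER BY total DESC"
).fetchall()
conn.close()

for region, n_sales, total in report:
    print(region, n_sales, total)
```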

R Packages

R has a thriving ecosystem of packages that add functionality to the core R language. These packages are distributed through CRAN and can be installed from within R itself (unlike Python, which uses separate package managers). The packages we list below are some of the most popular and commonly used packages for data science in R.

4. Tidyverse

Technically, tidyverse is a collection of R packages, but we list it as a single entry because it is the most commonly used set of packages for data science in R. Key packages in the collection include dplyr for data manipulation, readr for importing data, ggplot2 for data visualization, and many more.

The tidyverse packages have an opinionated design philosophy that revolves around “tidy data” — data with a consistent form that makes analysis (particularly with tidyverse packages) easier.

The popularity of the tidyverse has grown to the point that, for many, the idea of “working in R” really means working with the tidyverse in R.

5. ggplot2

The ggplot2 package allows you to create data visualizations in R. Even though ggplot2 is part of the tidyverse collection, it predates the collection and is important enough to mention on its own.

ggplot2 is popular because it allows you to create professional-looking visualizations fast using easy-to-understand syntax.

R includes built-in plotting functionality, but the ggplot2 package is generally considered superior and easier to use, and it is the most widely used R package for data visualization.

6. R Markdown

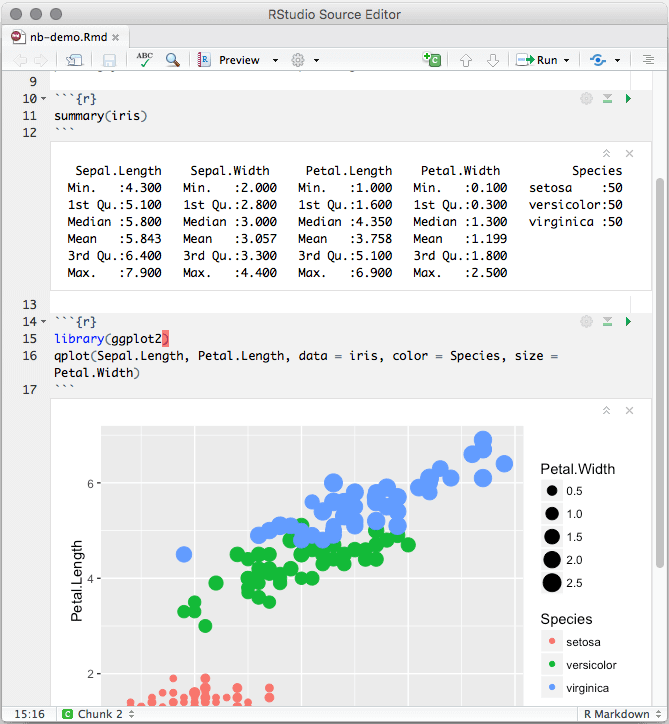

The R Markdown package facilitates the creation of reports using R. R Markdown documents are text files that contain code snippets interleaved with markdown text.

R Markdown documents are often edited in a notebook interface that allows the creation of code and text side by side. The notebook interface allows the code to be executed and the output of the code to be seen inline with the text.

An R Markdown notebook in action

R Markdown documents can be rendered into many formats, including HTML, PDF, Microsoft Word, books, and more!

7. Shiny

The Shiny package allows you to build interactive web apps using R. You can build functionality that allows people to interact with your data, analysis, and visualizations as a web page.

Shiny is particularly powerful because it removes the need for web development skills and knowledge when creating apps and allows you to focus on your data.

8. mlr

The mlr package provides a consistent syntax and set of features for working with machine learning algorithms in R. While R has built-in machine learning capabilities, they are cumbersome to work with. mlr provides an easier interface so you can focus on training your models.

mlr contains classification, regression, and clustering analysis methods, as well as many other related capabilities.

Python Libraries

Like R, Python also has a thriving package ecosystem, although Python packages are often called libraries.

Unlike R, Python's primary purpose is not as a data science language, so use of data-focused libraries like pandas is more or less mandatory for working with data in Python.

Python packages can be downloaded from PyPI (the Python Package Index) using pip, a tool that comes with Python but is external to the Python coding environment.

(A complementary alternative to pip is the conda package manager, which we'll talk about later on.)
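As a small sketch of how this fits together, the snippet below checks from inside Python whether a few libraries are installed and, if one is missing, prints the pip command you would run in a terminal. The `installed_version` helper is a hypothetical name introduced here for illustration; `importlib.metadata` is part of the standard library in Python 3.8 and later.

```python
import sys
from importlib import metadata


def installed_version(package_name):
    """Return the installed version of a package, or None if it is missing."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None


for name in ["pandas", "numpy", "matplotlib"]:
    version = installed_version(name)
    if version is None:
        # pip is run from a terminal, not from inside the Python session.
        print(f"{name} is missing; try: {sys.executable} -m pip install {name}")
    else:
        print(f"{name} {version} is installed")
```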

9. pandas

The pandas library is built for cleaning, manipulating, transforming and visualizing data in Python. Although it's a single package, its closest analog in R is the tidyverse collection.

In addition to offering a lot of convenience, pandas is also often faster than pure Python for working with data. Like R, pandas takes advantage of vectorization, which speeds up code execution.
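To make the idea of vectorization a little more concrete, here is a minimal sketch that compares a row-by-row loop with a single column-wise expression. The prices and quantities are made up for the example, and on data this small any speed difference is negligible; the benefit typically shows up on larger datasets.

```python
import pandas as pd

# Made-up prices and quantities, purely for illustration.
df = pd.DataFrame({"price": [2.5, 4.0, 1.25], "quantity": [4, 1, 8]})

# Pure-Python approach: loop over the rows one at a time.
loop_totals = [p * q for p, q in zip(df["price"], df["quantity"])]

# Vectorized approach: one expression operates on whole columns at once.
df["total"] = df["price"] * df["quantity"]

print(df)
```

Both approaches produce the same totals, but the vectorized version is shorter and avoids an explicit Python loop.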

10. NumPy

NumPy is a fundamental Python library that provides functionality for scientific computing. NumPy provides some of the core logic that pandas is built upon. Usually, most data scientists will work with pandas, but knowing NumPy is important as it allows you to access some of the core functionality when you need to.
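One way to see the relationship between the two libraries is to pull the NumPy array out of a pandas object and work on it directly. This is only a sketch, and the temperature readings are invented for the example.

```python
import numpy as np
import pandas as pd

temps_c = pd.Series([18.5, 21.0, 25.5], name="temp_c")  # made-up readings

# A pandas Series can hand back its data as a NumPy array.
arr = temps_c.to_numpy()

# NumPy arithmetic and functions operate on the whole array at once.
temps_f = arr * 9 / 5 + 32

print(type(arr).__name__)
print(temps_f, np.mean(temps_f))
```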

11. Matplotlib

The Matplotlib library is a powerful plotting library for Python. Data scientists often use the Pyplot module from the library, which provides a standard interface for plotting data.

The plotting functionality that is included in pandas calls Matplotlib under the hood, so understanding Matplotlib helps with customizing plots you make in pandas.
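The sketch below illustrates that connection: a plot made through pandas hands back a Matplotlib object, which can then be customized with ordinary Matplotlib methods. The sales figures are made up, and the "Agg" backend is selected only so the example runs without a display.

```python
import matplotlib

matplotlib.use("Agg")  # non-interactive backend so the sketch runs without a display

import matplotlib.pyplot as plt
import pandas as pd

df = pd.DataFrame({"month": [1, 2, 3, 4], "sales": [10, 14, 9, 17]})  # made-up data

# The pandas plot method returns a Matplotlib Axes object...
ax = df.plot(x="month", y="sales", kind="line")

# ...so regular Matplotlib methods can be used to customize it.
ax.set_title("Monthly sales")
ax.set_ylabel("Units sold")

plt.close(ax.figure)  # release the figure once we are done with it
```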

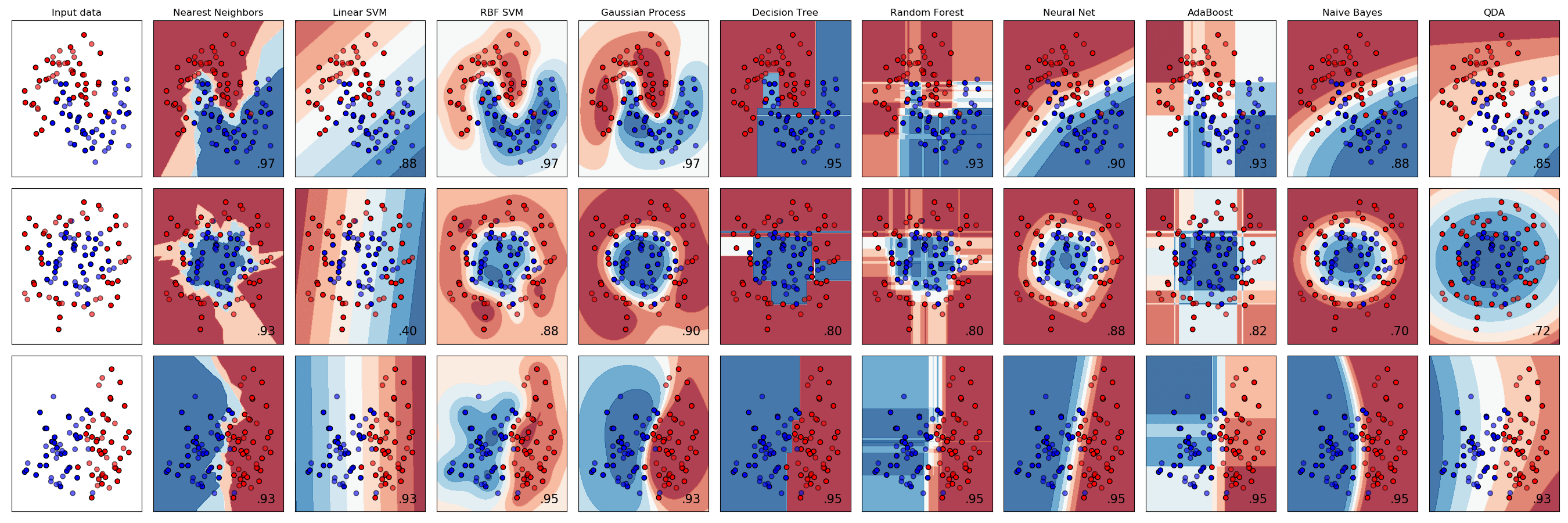

12. Scikit-Learn

Scikit-learn is the most popular machine learning library for Python. The library provides a set of tools built on NumPy and Matplotlib for that allow for the preparation and training of machine learning models.

Plots created with the scikit-learn library

Available model types include classification, regression, clustering, and dimensionality reduction.
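As a brief sketch of what training a classification model can look like, the example below fits a logistic regression on the iris dataset that ships with scikit-learn. The choice of model, the 25% test split, and the random seed are illustrative assumptions, not recommendations.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Load a small example dataset bundled with scikit-learn.
X, y = load_iris(return_X_y=True)

# Hold out a quarter of the rows to evaluate the model on unseen data.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Train a classifier and measure its accuracy on the held-out rows.
model = LogisticRegression(max_iter=1000)
model.fit(X_train, y_train)
accuracy = model.score(X_test, y_test)

print(f"Test accuracy: {accuracy:.2f}")
```

Most scikit-learn estimators follow this same fit-then-evaluate pattern, so swapping in a different model is usually a small change.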

13. TensorFlow

TensorFlow is a Python library originally developed by Google that provides an interface and framework for working with neural networks and deep learning.

TensorFlow is ideal for tasks where deep learning excels, such as computer vision, natural language processing, audio/video recognition, and more.

Software

So far, we've looked at the best languages for data science and the best packages for two of those languages. (As a query language, SQL is a bit different and doesn't use "packages" in the same sense.)

Next, we'll look at some software tools that are useful for data science work. These aren't all open-source, but they're free for anyone to use, and if you work with data on a regular basis they can be big time-savers.

14. Google Sheets

If this were not a list of free tools, then undoubtedly Microsoft Excel would be at the top of this list. The ubiquitous spreadsheet software makes it quick and easy to work with data in a visual way, and is used by millions of people around the world. Although the software isn't free, this Excel cheat sheet is!

Google Sheets, Google's free alternative to Excel, has the core functionality of Excel and is available to anyone with a Google account.

15. RStudio Desktop

RStudio Desktop is the most popular environment for working with R. It includes a code editor, an R console, notebooks, tools for plotting, debugging, and more.

Additionally, RStudio (the company that makes RStudio Desktop) is at the core of modern R development, employing the developers of the tidyverse, Shiny, and other important R packages.

16. Jupyter Notebook

Jupyter Notebook is the most popular environment for working with Python for data science. Similar to R Markdown, Jupyter notebooks allow you to combine code, text, and plots in a single document which makes data work easy.

Like R Markdown documents, Jupyter notebooks can be exported in a number of formats, including HTML, PDF, and more.

Dataquest's guided Python data science projects almost all task students with building projects in Jupyter Notebooks, since that's what working data analysts and scientists generally do in real-world work.

17. Anaconda

Anaconda is a distribution of Python designed specifically to help you get the scientific Python tools installed. Before Anaconda, the usual approach was to install Python by itself, and then install packages like NumPy, pandas, and Matplotlib one by one. That wasn't always a straightforward process, and it was often difficult for new learners.

Anaconda includes all of the main packages needed for data science in one easy install, which saves time and allows you to get started quickly. It also has Jupyter Notebooks built-in, and makes starting a new data science project easily accessible from a launcher window. It is the recommended way to get started using Python for data science.

Anaconda also includes the conda package manager, which can be used as an alternative to pip to install Python packages (although you can also use pip if you prefer).

Learning Data Science for Free

Above, we've listed some of the best free tools for data science. But of course, most of these tools are only truly useful once you've learned the skills required to wield them effectively.

Thankfully, there are lots of great free resources out there for learning data science, too! We've highlighted some of the best free books on data science, and we've published a lot of free Python tutorials and R tutorials to make learning easier.

The best way to learn is by actually writing code, though. Our interactive, in-browser courses will get you writing real code and working with real data quickly. And the first two full courses in each of our learning paths (that's hours and hours of learning that covers all of the fundamentals and more) are free.

We don't use videos, multiple choice questions, or fill-in-the-blanks. Instead, we challenge you to apply everything you're learning in real, functional code right from the start. And we'll check your answers automatically to make sure you've gotten everything right.